Hello! I’m a backend developer on the Wadiz Funding Development Team.

In this post, we’ll take a look at the "taptap" issue and outline the troubleshooting process. Rather than offering a simple solution, I hope this serves as a useful guide for those curious about how problems are identified and resolved in real-world scenarios.

What's the problem?

Wadiz has a screen called [Manage Payment Information].

Payment Card Registration Screen

Although only one card can be registered, a bug occasionally caused duplicate registrations. We implemented a Redis distributed lock to reduce the frequency to near zero, but the root cause remained elusive. It was particularly frustrating because the issue was difficult to reproduce. Determined to find the exact source, I set out on a quest! 🏃♀️🏃♂️

Successful reproduction in the development environment!

Rather than viewing the so-called "taptap" as a simple user action, I treated it as the API being called multiple times. This matters most in situations developers didn’t anticipate, such as taps at extremely high speed. After trying various things, I captured the following case in Chrome DevTools. If you look at the last two lines, you can see that the API in question was called multiple times.

[XHR] 'POST: /.../../get'

[XHR] 'POST: /.../../add'

[XHR] 'POST: /.../../add'

Is the problem that it's being called twice from the client?

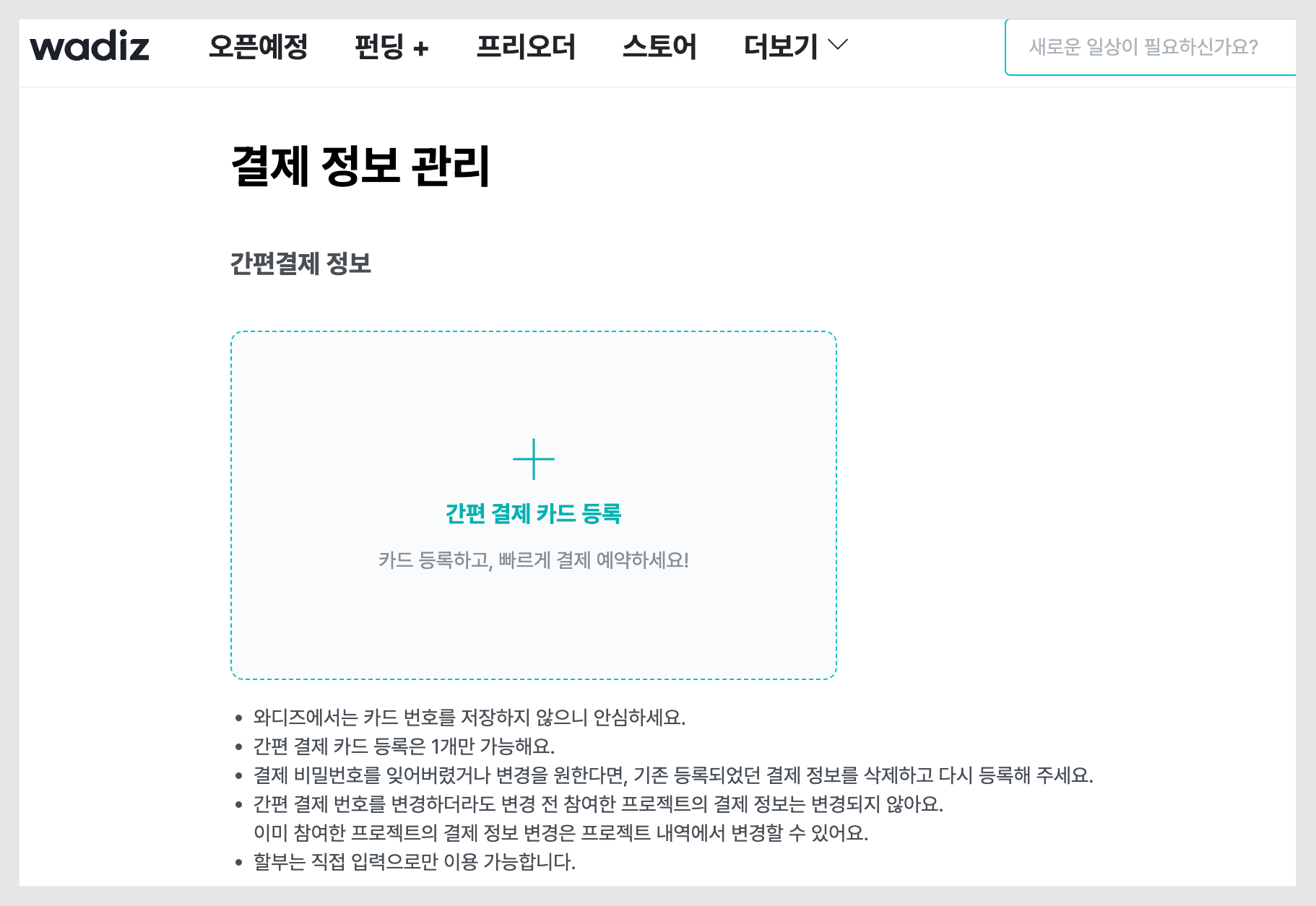

Normally, we would work with a front-end developer to limit the button to a single click. However, that’s not an option here. The reason comes down to one question: ‘Are there any relevant policies in the requirements?’ Defining user behavior is both a requirement and a policy. Below is the card registration API flowchart.

flowchart

In other words, the policy here is that no matter how many times the user makes a request, the server automatically filters the results so that only one card is displayed. The fact that the client makes multiple requests is not an issue at all. Therefore, this issue should be viewed as a problem originating on the server side.

The server. There must be something unusual about it.

So, how should the server have worked? According to the policy, only one card should be held, so if two cards are received, one should be deleted. However, instead of deleting it, the request was accepted as-is and the card was registered anew. Here, we can redefine the issue: ‘The server’s defense logic does not function in certain situations.’

This isn’t something that happens every time the function is called; it only occurs under certain specific conditions, so now we need to identify those conditions.

There are currently three or more instances of duplication

What's unusual is that whenever the duplicates pile up, the screen always freezes.

Card Registration Screen

When I clicked the [Next] button on the screen above, the screen should have advanced, but it froze. What’s more, the developer tools didn’t even log any API calls. This turned out to be a huge clue!

If the Chrome browser hits a timeout after making an API call, it automatically retries the request. In other words, it waits for a response until the timeout occurs and logs the result in the developer tools. However, if no log appears, it means the server is not responding.

Why is the server still holding onto the request? The logic was implemented according to the policy, so when duplicate requests arrived, it should have accepted only one and discarded the other. Based on the log below, the evidence clearly points to the server.

2023-12-28 13:45:56.081 [wadiz] Call started

...(omitted)

2023-12-28 13:46:27.408 [wadiz] Call finished successfully

Why can't the server filter it?

If you receive one request and then a second request, the card created during the first request must be deleted. If this did not happen, it means there was concurrent access. And as mentioned in the introduction to this article, measures to control concurrent access have already been implemented.

It probably isn't a Redis distributed lock issue...! Because Redis operates on a single thread, it can help manage concurrency, and it is often used for distributed locking in distributed system environments through clustering. Moreover, this is a well-known method, and its effectiveness has already been proven in many cases.

"Could the most trustworthy person actually be the problem? The moment I stopped believing that wasn't the case, total chaos ensued. That's why I started investigating frantically."

1) Is it because I didn't set a pessimistic lock? NO

Optimistic locking assumes that "there won't be any problems" and applies flexible locking, whereas pessimistic locking assumes that "this is bound to cause problems" and applies locking from the very beginning.

Even if Redis functions properly, I wondered: if the moment a thread relinquishes control and the moment another thread acquires control happen to coincide perfectly, wouldn’t accidental concurrency still be possible even with locks in place?

I considered setting a pessimistic lock to block access right from the entrance, but I decided against it. This is because a pessimistic lock sets concurrency control to the highest level, which creates a trade-off with the risk of overloading the system. I didn’t dare to predict what side effects this decision might have. I suppose I’ll have to keep it as a last resort.

2) Why doesn't MyBatis throw an error? NO

I also noticed something strange in the source code.

    BillingKeyAccessClass.selectOne(userId);

This is the logic for retrieving a single record. Since a duplicate was found, selectOne() should have thrown an error. There was some MyBatis code left in the project where the issue occurred. To figure out why, I started digging into the implementation.

    public <T> T selectOne(String statement, Object parameter) {
        List<T> list = this.selectList(statement, parameter);
        if (list.size() == 1) {
            return list.get(0);
        } else if (list.size() > 1) {
            throw new TooManyResultsException("Expected one result (or null) to be returned by selectOne(), but found: " + list.size());
        } else {
            return null;
        }
    }

Although it’s called `selectOne()` at the top level, it actually behaves as `selectList` internally. You can also see that it should throw an exception if `size` is greater than 1.

However, no exception was thrown. I was able to find the reason for this in the query.

    SELECT data_to_retrieve
    FROM table_A ...
    LEFT JOIN ...
    WHERE ...
    ORDER BY table_A.Registered
    LIMIT 1;

For some reason, the result was being filtered with ORDER BY and LIMIT! I found the reason behind this thanks to a teammate’s advice. Do you remember that legacy project I mentioned earlier? I realized that I had actually written logic to perform ORDER BY and LIMIT back at my previous company. Because of the nature of MyBatis, I hadn’t been able to find it without the help of an IDE. (ORM is the best…)
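To make the masking effect concrete, here is a minimal sketch. It assumes a hypothetical helper that mirrors the selectOne() contract quoted above (it is not MyBatis itself) and a made-up pair of duplicate rows. It only illustrates why a query ending in LIMIT 1 can never trigger the "too many results" exception.

```java
import java.util.List;

// Hypothetical stand-in that mirrors the selectOne() contract quoted above;
// it is not MyBatis itself.
final class SelectOneSketch {

    // Returns the single row, null for no rows, and fails on more than one row.
    static <T> T selectOne(List<T> rows) {
        if (rows.size() == 1) {
            return rows.get(0);
        }
        if (rows.size() > 1) {
            throw new IllegalStateException("Expected one result but found: " + rows.size());
        }
        return null;
    }

    // Emulates what "LIMIT 1" does to the result set before selectOne() sees it.
    static <T> List<T> limitOne(List<T> rows) {
        return rows.isEmpty() ? rows : rows.subList(0, 1);
    }

    public static void main(String[] args) {
        List<String> duplicatedCards = List.of("card-887", "card-888"); // made-up rows

        // With LIMIT 1 the duplicate is invisible: exactly one row comes back.
        System.out.println(selectOne(limitOne(duplicatedCards)));

        // Without LIMIT 1 the duplicate surfaces as an exception.
        try {
            selectOne(duplicatedCards);
        } catch (IllegalStateException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

In other words, the exception path exists, but the query guarantees it is never reached.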

I looked into several other areas as well, but didn’t find any major clues. However, I was at least able to come up with one potential solution. If I modify the code to reverse the sorting order in the ORDER BY clause, I can implement a deletion logic even if the data is duplicated.

But the purpose of this journey is prevention! It’s not about removing duplicate data—it’s about preventing duplicates from occurring in the first place. So why can’t the server filter them out? That question remains unanswered.

We've found the culprit.

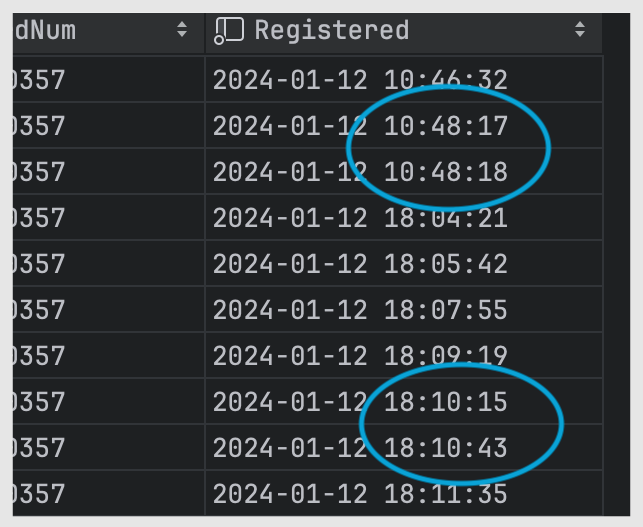

Please take a look at the logs below, noting the times!

First log

[17:46:03.797][http-bio-8080-exec-2] INFO - delete() | userID = 100, targetSeq = 887

[17:46:03.797][http-bio-8080-exec-2] INFO - delete() | delete success

Second log

[17:46:30.791][http-bio-8080-exec-9] INFO - delete() | userID = 100, targetSeq = 887

[17:46:30.791][http-bio-8080-exec-9] INFO - delete() | delete success

Third log

[17:46:57.931][http-bio-8080-exec-5] INFO - delete() | userID = 100, targetSeq = 887

[17:46:57.931][http-bio-8080-exec-5] INFO - delete() | delete success

Currently, number 887 is the latest entry in the database. If a new card is registered, number 887 will be deleted, and the new card will be inserted as number 888. Requests at 03 seconds, 30 seconds, and 57 seconds all attempt to delete number 887. In other words, it’s a situation where everyone is claiming, “I’m the real No. 888.”

Why did this race condition occur? This naturally leads to the suspicion: "Could Redis actually be malfunctioning?"

Let's take a closer look at Redis.

Before we dive in, I’ll briefly explain the basics of Redis and how to use it to prevent “taptap” issues.

1) Redis Basics

Redis runs as a single-threaded application. Therefore, if you set up a single Redis node, you won’t encounter concurrency issues. Setting a specific value on a resource can block access from other threads. This is referred to as a "lock." In other words, Redis does not actually provide a built-in locking mechanism; rather, users (developers) create locks by leveraging Redis's features.

    setnx(key, value) // set if not exist

If there is no value for the given key, the command above adds one. To delete the value under specific conditions, or to have it expire automatically after a certain period, do the following.

    if (...) {
        del(key) // or expire
    }

However, this approach also creates a single point of failure (SPOF) because it relies on a single node. A contingency plan is needed in case the node goes down, which leads to the use of distributed locks.
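The set-if-absent idea described above can be sketched without a Redis server. The class below is an in-memory stand-in built on `ConcurrentHashMap`, not a Redis client, and the key name and request labels are hypothetical. The owner check in `release` is an extra safety step I added for illustration; the pseudocode above deletes the key unconditionally.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;

// In-memory stand-in for a single Redis node; only set-if-absent semantics are modeled.
final class SetnxLockSketch {
    private final ConcurrentMap<String, String> store = new ConcurrentHashMap<>();

    // Like SETNX: stores the value only if the key is absent; true means this caller won.
    boolean setnx(String key, String value) {
        return store.putIfAbsent(key, value) == null;
    }

    // Like DEL, but only removes the key if it still holds the caller's value.
    boolean release(String key, String value) {
        return store.remove(key, value);
    }

    public static void main(String[] args) {
        SetnxLockSketch redis = new SetnxLockSketch();
        String key = "lock:card:100"; // hypothetical key name

        System.out.println(redis.setnx(key, "request-A"));   // first caller takes the lock
        System.out.println(redis.setnx(key, "request-B"));   // second caller is rejected
        System.out.println(redis.release(key, "request-B")); // non-owner cannot release
        System.out.println(redis.release(key, "request-A")); // owner releases
        System.out.println(redis.setnx(key, "request-B"));   // lock is free again
    }
}
```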

2) Using Redis (Distributed Lock)

The simplest way to address a single point of failure (SPOF) is to implement master-slave replication. This allows you to apply locks even in a distributed environment. However, there is an issue here as well. If a problem occurs with the master, it might seem that the slave would take over to resolve the issue, but since Redis replication operates asynchronously, it can lead to race conditions. Therefore, even with clustering, the possibility of falling into a race condition remains. For example:

- A sends a request and acquires a lock on the Master.

- The master goes down before data is transferred to the slave.

- The slave is promoted to master.

- A request from B arrives, and it acquires a lock on the same resource as A.

To address this, Redis has proposed an algorithm called RedLock. It is designed to make distributed locking safer than relying on a single master with asynchronous replication. Although it changes several factors, such as the time units for lock acquisition and expiration and the criteria for winning lock contention, it is not without its flaws.

3) A Deep Dive into Redis

After thoroughly reviewing everything from the cluster configuration file (.conf) to whether there were any differences between the various profile environments, I didn’t find anything that seemed to be the cause of the problem.

That was the moment my assumption about the culprit turned out to be wrong! Fortunately, I found a crucial clue. I mentioned RedLock earlier. Among the details regarding RedLock’s limitations, the section on Network Delay seemed particularly suspicious.

It’s darkest under the lamp. Back to Basics

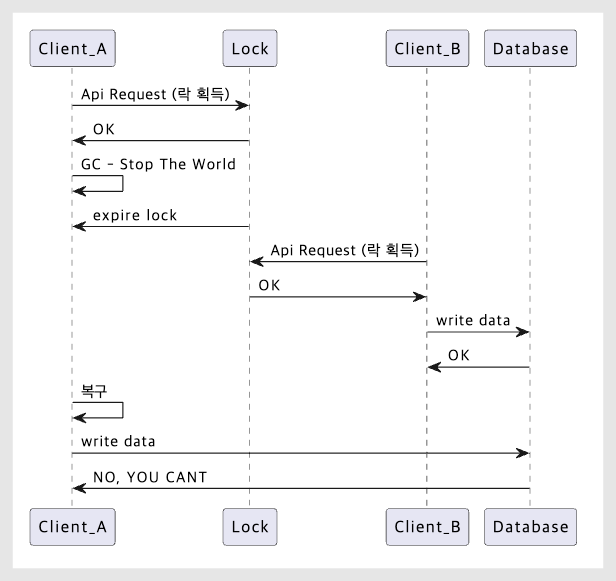

If there is an operation that involves the garbage collector and requires a lock, the following issue may occur.

- A acquires a distributed lock.

- The application suddenly stops, and the distributed lock expires. (This occurs when the service—not Redis—has gone down.)

- B acquires a distributed lock on the same resource.

- Application A is restored and resumes operation.

- Concurrency issue detected 💥

The situation above, depicted as a sequence of events

"The GC cycle is so short—how is that even possible?!" Apparently, it is possible. In fact, In the case of HBase, they encountered this issue and subsequently provided guidelines for GC tuning. Furthermore, it’s said that similar situations can occur not just due to GC-induced “Stop the World” events, but also due to network latency.

My first thought was that the cause was "network latency." But while I was investigating that, I ended up back where I started. When I looked over the entire code again, I noticed some logic I had overlooked. (As the saying goes, "Back to basics.")

The foundation of good object-oriented design is high cohesion and low coupling. To give a well-known example, imagine you need to replace a car seat, but you have to remove the steering wheel as well. If the steering wheel is tightly coupled to the seat, whatever happens in the future, they will remain dependent on each other.

Please take a close look at the business logic in question.

    ...
    get_user_info();
    validate_user_info();
    ...
    request_payment_key_from_external_company();
    validate_other_errors();
    ...
    save();

If you list the steps based on the question “What role does it play?”, without having to look at the entire code, you’ll get something like the above. Right in the middle sits the “request_payment_key_from_external_company()” method. Furthermore, since the request is sent synchronously, it was immediately apparent that the coupling was extremely high. Testing the issue from this perspective, I was able to gather concrete evidence.

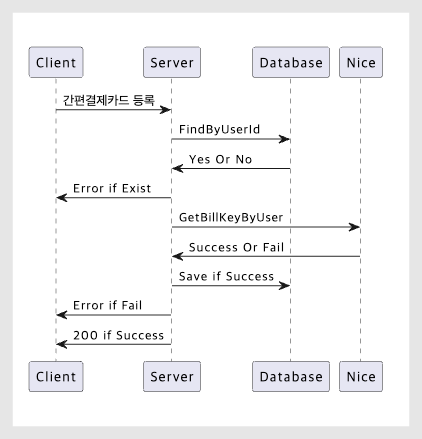

The time when the data was saved to MySQL

In the first instance, the duplication occurred within one second, while in the second instance, it took about 30 seconds. This means the delay could potentially exceed 30 seconds. Such delays do not occur on our internal network. It could mean we are waiting for a response from an external source.

Next, I checked the logs again.

[10:22:28.983][http-bio-8080-exec-7] INFO - ClientConnector.request() | request

[10:22:28.985][http-bio-8080-exec-7] INFO - ClientConnector.request() | response

[10:22:28.986][http-bio-8080-exec-7] INFO - ClientConnector.request() | end

In a successful scenario, the entire process, from the communication request to the response and connection termination, is completed within one second. However, the situation was different when duplicate keys occurred.

[10:23:25.832][http-bio-8080-exec-7] INFO - ClientConnector.request() | request

...

[10:23:38.863][http-bio-8080-exec-7] INFO - ClientConnector.request() | response

[10:23:38.865][http-bio-8080-exec-7] INFO - ClientConnector.request() | end

It took about 13 seconds to receive a response, and the connection was quickly terminated.

There were times when the wait was even longer.

[10:24:19.498][http-bio-8080-exec-7] INFO - ClientConnector.request() | request

[10:24:42.510][http-bio-8080-exec-4] DEBUG - AbstractHandlerMethodMapping...

The response is nowhere to be seen; it takes about 30 seconds to show up. In the meantime, another thread is already processing a request.

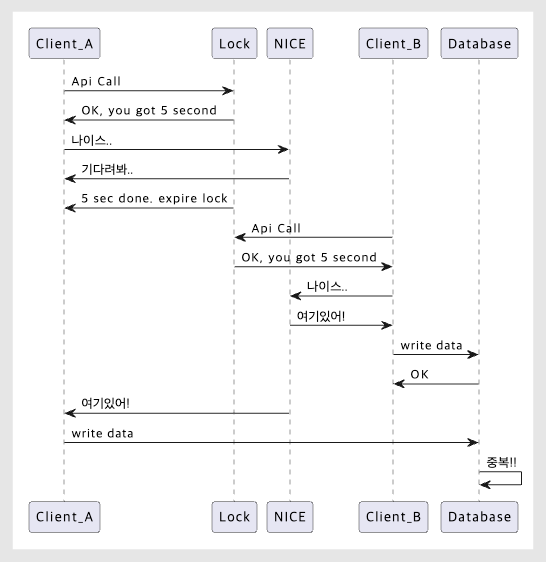

We caught the culprit, but there are still some unanswered questions. If network delays caused by reliance on external services were the cause, why didn’t the Redis lock stop it?

Duplicate communications

Previously, we set the expiration time for the Redis distributed lock to 5 seconds. So, as shown in the API flow above, if the response time from the external provider exceeded 5 seconds (the default), the next request could acquire the lock even though the current process hadn’t finished yet. From Redis’s perspective, this wasn’t a problem.
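The timing problem described above can be reproduced with a small single-threaded simulation. It uses a manual clock rather than real Redis. The 5-second TTL and the 13-second external call come from this post, while the 60-second TTL, the key name, and the point at which the second tap arrives are assumptions chosen purely for illustration.

```java
import java.util.HashMap;
import java.util.Map;

// Single-threaded simulation with a manual clock; not real Redis and not the production code.
final class LockExpirySketch {
    private final Map<String, Long> expiresAtMillis = new HashMap<>();
    private long nowMillis = 0;

    void advance(long millis) {
        nowMillis += millis;
    }

    // Acquires the lock if it is free or its TTL has already elapsed.
    boolean tryLock(String key, long ttlMillis) {
        Long expiresAt = expiresAtMillis.get(key);
        if (expiresAt != null && expiresAt > nowMillis) {
            return false; // still held by an earlier request
        }
        expiresAtMillis.put(key, nowMillis + ttlMillis);
        return true;
    }

    // Returns how many requests were allowed through to save a card for one user.
    static int simulate(long ttlMillis, long externalCallMillis) {
        LockExpirySketch lock = new LockExpirySketch();
        int saved = 0;

        boolean first = lock.tryLock("user:100", ttlMillis);  // request 1 starts
        lock.advance(externalCallMillis / 2);                  // request 1 still waits on the provider
        boolean second = lock.tryLock("user:100", ttlMillis); // the repeated tap arrives

        if (first) saved++;
        if (second) saved++;
        return saved;
    }

    public static void main(String[] args) {
        System.out.println(simulate(5_000, 13_000));  // TTL shorter than the external call
        System.out.println(simulate(60_000, 13_000)); // TTL longer than the external call
    }
}
```

With the short TTL both requests get through; with the longer TTL only the first one does. Redis behaves correctly in both runs, which is why its configuration looked clean.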

(You might be wondering why the table isn’t blocked. During the initial normalization process, no separate keys were set on that table, so the table simply acts as a storage layer, saving data as it is received.)

Ultimately, the cause of the duplicate saves was a lock expiration time shorter than the external provider's response time. I really had to search high and low to find such a simple cause. 😂

Here's how we improved it!

There were several options worth trying. We could have placed locks on the tables where data is stored, or improved accuracy by implementing a system like Zookeeper. Alternatively, we could have added fencing tokens to Redis to perform additional consistency checks. We could also have switched to an asynchronous calling method to reduce coupling. In the end, we chose to improve the system by increasing the lock duration in Redis.
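For readers unfamiliar with fencing tokens, one of the options mentioned above, here is a rough sketch of the idea. This is not what we implemented, and every name in it is hypothetical. It assumes the lock service hands out an increasing number with each lock grant, and that the storage layer refuses writes carrying an older number than one it has already seen.

```java
import java.util.concurrent.atomic.AtomicLong;

// Hypothetical sketch of a fencing-token check; names are illustrative only.
final class FencingTokenSketch {
    private final AtomicLong nextToken = new AtomicLong();
    private long highestTokenSeen = 0;

    // The lock service hands out a strictly increasing token with every lock grant.
    long grantLock() {
        return nextToken.incrementAndGet();
    }

    // The storage layer rejects any write carrying a token older than one it has already seen.
    synchronized boolean save(long token) {
        if (token < highestTokenSeen) {
            return false;
        }
        highestTokenSeen = token;
        return true;
    }

    public static void main(String[] args) {
        FencingTokenSketch s = new FencingTokenSketch();
        long tokenA = s.grantLock(); // request A gets the lock, then stalls
        long tokenB = s.grantLock(); // A's lock expires; request B gets a newer token

        System.out.println(s.save(tokenB)); // B writes first
        System.out.println(s.save(tokenA)); // A wakes up late and is rejected
    }
}
```

Note that this only blocks a stale holder that writes after a newer one. If the stalled request happens to write first, both writes pass, so the check would still need to be combined with the delete-then-insert policy.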

I narrowed down the options using the process of elimination! I wanted to avoid altering the table structure as much as possible, and introducing a new system would have required us to factor in the learning curve. Since this is a legacy project, it was difficult to gauge the scope of potential side effects from changing how Redis operates. Additionally, because the business logic required immediate responses, switching to an asynchronous approach was also challenging.

Fortunately, this feature doesn’t generate excessive traffic. We were able to choose to increase the lock time because we determined it wouldn’t cause an overload. As a result, when we recently increased the lock time to the new standard, not a single duplicate occurred.

In closing

How did you find the troubleshooting process? In an era where there are so many convenient tools available, we found the solution by going back to the basics. From the emergence of various technologies to misplaced suspicions and rational decisions, I think we’ve shown you the full range of a developer’s emotions. Now that a month has passed since I joined the company, I feel a sense of pride knowing that I’ve been able to contribute, even in a small way, to the team and the organization.

It took some time because I was still unfamiliar with the service architecture, but thanks to my colleagues who worked alongside me, we were able to reach a conclusion. Troubleshooting in the real world isn’t much different; in the end, it’s a battle of persistence. Thank you!

👉 Want to learn more about Wadiz Backend Developers and Business Development Team? (Read the interview)

👉 Wadiz is hiring Java backend developers!